Guideline impact

Determining what measures to use is important, but equally important is knowing how to use the data to tell a story.

Guidelines represent a significant investment of funds and volunteer labour in an effort to improve health outcomes. Demonstrating that these efforts have made a difference is in the interests of all patients, users, developers and funders (Ioannidis, Greenland et al. 2014).

Different people will be interested in different outcomes and, consistent with guideline development principles, consumer involvement and stakeholder consultation are essential to determine what the right questions are and what outcomes matter most to different people.

Determining what indicators or metrics to use is important, but just as important is to know how to use the data to tell a story about impact.

One of the biggest challenges to measuring impact is a lack of consensus on what ‘impact’ really means, and on how to identify and select the outcomes that will serve as measures of impact.

NHMRC defines the impact of research as the verifiable outcomes that research makes to knowledge, health, the economy and/or society, and not the prospective or anticipated effects of the research. It is the effect of the research after it has been adopted, or adapted for use, or used to inform further research.

In the context of health guidelines, impact can similarly be defined as the verifiable outcomes that guideline recommendations make to behaviour, health, well-being, knowledge, the economy and/or society. The key term is the word ‘verifiable’, which indicates there should be a metric or an assessment that is associated with an outcome. While ideas to measure impact can be discussed and documented during the development of the guideline (and in some situations data collection can begin), it is not until after the release of guideline recommendations that the impact of the published guideline can be measured.

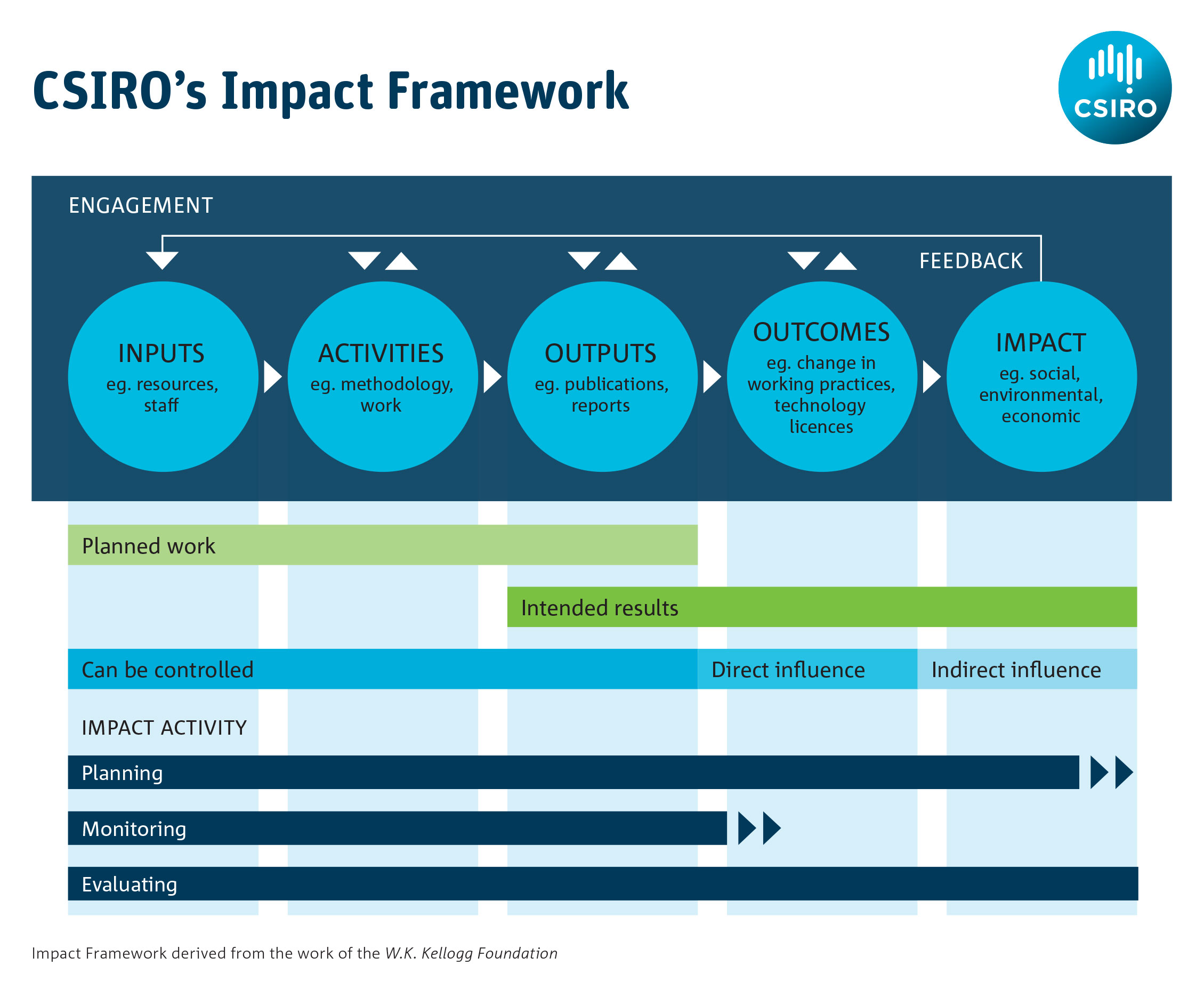

It is helpful to use a structured process such as a logic model for impact assessment as this can help to articulate what may be measured at each stage of the journey from inputs to impacts. It also helps identify what data might be available to demonstrate impact, since the development of a logic model requires some thought as to the various forms that impact might take. Australia’s CSIRO has published guidance on how to use logic models to measure impact: its impact management framework is shown in Figure 1.

What to do

1. Start an impact strategy as the guideline is being developed

Put an impact strategy in place at the beginning of the development process, even though it may change later. Documenting impact at the outset can help to:

- stay within scope

- establish systems to track impact

- assess impact.

The key to any strategy is to ask yourself: why do you need to measure this, and what do you think success would look like?

Logic models are an important tool to inform an impact strategy: they provide a systematic and visual pathway that links the causal steps between your activities and the outcomes you want to achieve (Mills, Lawton et al. 2019). One example is the use of a logic model framework in stroke research (Ramanathan, Reeves et al. 2018) to capture the processes, outputs and impacts involved for specific research streams. These measures will then be combined with a narrative and presented in a ‘scorecard’ that serves as a practical way to communicate research findings.
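As a rough illustration, a logic model can be kept as a simple ordered structure so that gaps in the pathway are easy to spot. The sketch below is an assumption for illustration only: the five stage names follow the ‘inputs to impacts’ journey described above, while the `LogicModel` class and all example entries are hypothetical and are not taken from any published framework.

```python
from dataclasses import dataclass, field

# Stage names follow the "inputs to impacts" journey described in the text.
# The class and the example entries below are hypothetical placeholders.
STAGES = ("inputs", "activities", "outputs", "outcomes", "impacts")


@dataclass
class LogicModel:
    """Ordered stages of a logic model, each holding free-text entries."""
    stages: dict = field(default_factory=lambda: {s: [] for s in STAGES})

    def add(self, stage: str, entry: str) -> None:
        """Record an entry against one stage of the pathway."""
        if stage not in self.stages:
            raise ValueError(f"unknown stage: {stage}")
        self.stages[stage].append(entry)

    def empty_stages(self) -> list:
        """Return stages with nothing recorded yet, in pathway order."""
        return [s for s in STAGES if not self.stages[s]]


model = LogicModel()
model.add("inputs", "funding and volunteer time")
model.add("activities", "evidence review and stakeholder consultation")
model.add("outputs", "published guideline recommendations")
model.add("outcomes", "change in prescribing practice")

print(model.empty_stages())  # ['impacts']
```

Listing the empty stages is one way to prompt discussion about what could be measured at each point and what data might exist to support it.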

Your impact strategy should address:

- how success should be defined

- what outcomes you will measure

- how you determined these outcomes

- the time points when these outcomes will be measured

- what baseline indicators should be used

- what comparators will be used (if relevant)

- why they should be measured

- the resources required to measure these outcomes

- how you will monitor and review reporting of these outcomes

- what message you want to convey to the public or to specific groups.
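The items in the list above could be recorded for each planned outcome so that gaps are visible early. The following is a minimal sketch under stated assumptions: the `OutcomePlan` record, its field names and the example values are hypothetical and do not represent an NHMRC template.

```python
from dataclasses import dataclass, fields
from typing import Optional


@dataclass
class OutcomePlan:
    """One planned outcome; field names are illustrative, not an NHMRC schema."""
    outcome: str
    rationale: Optional[str] = None           # why it should be measured
    baseline_indicator: Optional[str] = None  # what baseline will be used
    comparator: Optional[str] = None          # only needed if relevant
    time_points: Optional[str] = None         # when it will be measured
    resources: Optional[str] = None           # what is needed to measure it
    review_process: Optional[str] = None      # how reporting is monitored


# Fields that may legitimately stay blank.
OPTIONAL_FIELDS = {"comparator"}


def missing_items(plan: OutcomePlan) -> list:
    """List the required planning fields that are still blank."""
    return [
        f.name
        for f in fields(plan)
        if f.name not in OPTIONAL_FIELDS and not getattr(plan, f.name)
    ]


# Hypothetical example values.
plan = OutcomePlan(
    outcome="referral rate to specialist clinic",
    rationale="funder asked for evidence of practice change",
    time_points="12 and 24 months after release",
)
print(missing_items(plan))
# ['baseline_indicator', 'resources', 'review_process']
```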

You will also need to think about the more practical elements of project management such as:

- the resources that are available to you (funding, expertise and tools)

- engagement with the people and organisations that will use the guideline – will they collaborate with you to conduct these activities and champion the work?

- how long it will take.

As the guideline is developed, new information may come to light or priorities may change. Indicators can always be re-evaluated at the guideline planning stage; however, once measurement starts after the guideline is published, you will have less flexibility to make adjustments.

Undertaking a trial evaluation using early or ‘dummy’ data is a good quality check which can help to ensure that the data being collected will be suitable for evaluation following the release of the guideline. Once these measurable outcomes have been identified and laid out in a theory of change or logic model framework (Kneale, Thomas et al. 2015), it is the impact narrative that joins them together.

A list of guidelines where impact strategies have been used is provided in the Useful guidelines section of this module.

2. Document what a successful guideline would look like

Documenting in advance what a successful guideline would look like is an important part of controlling its scope and guiding the decisions you make throughout the development process. It would typically be documented in the purpose and aims section of your guideline.

While most people would agree that improving patient care, improving health outcomes or preventing disease are the ultimate goals of a guideline, the hard part is proving that you have achieved these outcomes. Ask yourself: why is it important to demonstrate impact, and to whom must it be demonstrated? Then you can start to unpack what outcomes and indicators could look like. Of course, this will be refined along the way, but if you are able to track data from the very beginning, for instance through a clinical registry, it can help with refining the types of data most relevant to showing impact. Another advantage of doing this is that it can provide control data for pre- and post-guideline comparisons.

Success will mean different things to different people. Guideline developers surveyed by NHMRC reported that guideline success could be:

- improved health outcomes as a result of implementing guideline recommendations

- reduced harms (e.g. medication, alcohol use or risky behaviours)

- more efficient use of health resources

- an influence on clinical practice for clinicians and consumers

- a change in policy, clinical practice or research priorities

- demonstrating broad reach and uptake (such as where guidelines are used nationally and internationally, or have been adapted for use by other organisations).

Measuring impact is about demonstrating whether your efforts have made a difference. The earlier you decide what success looks like, the easier it will be to determine what should be measured. Deciding which outcomes you want to track, or have the capacity to track, is where the challenge lies.

For example, in the National Blood Authority’s 2013-2016 Strategic Plan, success is described as ‘the availability of blood and blood products in the right quantities, at the right time and in the right place.’ In this context, success could be demonstrated by data showing that wastage of blood products has been minimised, or that transfusion-transmitted infections have been reduced.

3. Decide what outcomes will demonstrate guideline impact

Most guideline projects start with the overall aim of improving individual or population health outcomes. Commonly there are secondary aims such as enhancing clinician confidence in their practice, improving the cost-effectiveness of care or highlighting research gaps. It is likely there will be a gradation of outcomes to consider from an individual level to a health system level, so it is helpful to outline what an ideal scenario could look like with data. For example, what data would verify that your guidelines had produced impact? The ideal scenario should be developed with consumers and users to ensure the critical outcomes and inputs are explored (Armstrong, Mullins et al. 2018).

This exercise will also help reveal whether there are likely to be fundamental obstacles to demonstrating guideline impact.

For example, when the Australian Dietary Guidelines were released in 2013 (NHMRC 2013), an expected outcome might have been an improvement in the health of Australians through healthier eating. What would be the ideal data sources needed to answer whether Australians are eating healthier diets? How could you determine what part the Australian Dietary Guidelines played in this? It is important to consider attribution of the impact of a guideline. The Australian Commonwealth Scientific and Industrial Research Organisation (CSIRO) defines attribution as: ascription of a causal link between observed or expected to be observed changes and a specific intervention. It represents the extent to which observed development effects can be attributed to a specific intervention or to the performance of one or more partners taking account of other interventions (or factors) (CSIRO 2015).

For guidelines, it is necessary to acknowledge those outcomes that are important but too difficult or impractical to measure, and then to focus on more achievable outcomes that can be measured instead.

It should also be recognised that in time there may be future opportunities or changing circumstances that could allow these outcomes to be measured. Acknowledging them is an important way to alert future funders, researchers or guideline developers to this possibility.

Intended impacts should be documented alongside the description of the scope. Discussions and decisions that lead to the selection of impact measures should be recorded throughout the guideline development process, including any information collected from stakeholders.

4. Select specific outcomes that are measurable

Consider using a conceptual framework to help determine what you can reasonably expect to achieve. The sphere of influence framework is a useful concept to determine what is and what is not within your power to influence (Tilley 2018).

There are many useful ideas for metrics that can be used to guide impact. The RAND Corporation has published an ‘ideas book’ that details 100 metrics that can be used in a number of domains (Guthrie, Krapels et al. 2016). Metrics used to evaluate use and awareness are relatively easy to source and can be managed in the short term (upon publication of your guideline and on an ongoing basis) to assess basic outcomes. These include:

- document downloads

- Google Analytics

- citations in journal articles, government reports and clinical documents, and at conferences

- media or news articles

- Twitter analytics.

These data are useful to track over time and to monitor for certain events but they are unlikely to provide a very detailed picture of whether or not the guideline is having an impact on important outcomes.
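Tracking a use-and-awareness metric over time can be as simple as counting events per month. The sketch below assumes a hypothetical export of download dates; the dates are invented for illustration and do not come from any real analytics tool.

```python
from collections import Counter
from datetime import date

# Hypothetical download log: one date per download event.
downloads = [date(2021, 9, 15), date(2021, 9, 20), date(2021, 10, 2)]

# Group events by calendar month (formatted as YYYY-MM) and count them.
monthly = Counter(d.strftime("%Y-%m") for d in downloads)

for month in sorted(monthly):
    print(month, monthly[month])
# 2021-09 2
# 2021-10 1
```

Counts like these show reach and can flag spikes around particular events, but as noted above they say little about whether practice or health outcomes have changed.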

Efforts to measure guideline impact in the medium term (perhaps a year after publication and onwards) have focussed predominantly on knowledge, guideline adherence, or compliance with guideline recommendations (Heinemann, Roth et al. 2003, Horning, Hoehns et al. 2007, Bolton 2018), the implication being this behaviour will lead to better outcomes. Depending on the topic of the guideline it may also be possible to monitor changes in resource use (such as requests for tests and treatments (Lamb 2012), rates of referral (Inderjeeth 2009) or prescribing practices). This level of monitoring will likely require dedicated funding and a team, including researchers, to investigate the outcomes.

Collection methods may involve:

- surveys of knowledge or acceptability

- pre- and post-test comparisons

- audits

- collecting resource usage data.
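A pre- and post-guideline comparison of audit data can be sketched as a difference between two adherence proportions. This is only an illustration under stated assumptions: the record layout (one entry per audited case with an `adherent` flag), the `adherence_rate` helper and the sample data are all hypothetical.

```python
def adherence_rate(records: list) -> float:
    """Proportion of audited cases where care matched the recommendation."""
    if not records:
        raise ValueError("no audit records supplied")
    return sum(1 for r in records if r["adherent"]) / len(records)


# Hypothetical audit data: one dict per audited case.
pre = [{"adherent": a} for a in (True, False, False, True, False)]
post = [{"adherent": a} for a in (True, True, False, True, True)]

before = adherence_rate(pre)   # 2 of 5 cases adherent
after = adherence_rate(post)   # 4 of 5 cases adherent
change = after - before

print(f"before={before:.0%} after={after:.0%} change={change:+.0%}")
# before=40% after=80% change=+40%
```

A raw difference like this describes a change in practice; it does not by itself show that the guideline caused the change, and a real audit would also need an adequate sample size and an appropriate statistical comparison.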

Demonstrating improved health outcomes with data can take many years (Cadilhac, Andrew et al. 2017). These longer-term outcomes and impacts will most likely be assessed by teams of researchers, economists or epidemiologists who might look at clinical indicators, prescribing practices, or the economic impacts of recommendations. National registry data have become an important tool for maintaining a consistent data set with which to track health outcomes over a long period. In one such example, data collected through the National Stroke Registry feed into the ongoing cycle and evaluation of recommendations for the Clinical Guidelines for Stroke Management.

5. Look for data that are available to use

Data can come from many different sources. You might find that there are data or information available that you did not originally intend to collect but which later become relevant to telling your story.

When developing recommendations, the GRADE Evidence to Decision framework can provide a structured opportunity for the guideline development group to consider data and measurement needs for each recommendation. Surveys also present a low-cost method to obtain baseline data that can be repeated at specified intervals.

You can look for data that are already available to use through:

- publicly available sources and reports such as the Australian Government’s www.data.gov.au, and those produced by the Australian Bureau of Statistics, the Australian Institute of Health and Welfare, Medicare, Pharmaceutical Benefits Scheme (PBS) or Medicare Benefits Schedule (MBS)

- prescription data available through the National Prescribing Service

- the Australian Commission on Safety and Quality in Health Care’s Atlas of Healthcare Variation

- citations or references to the guidelines in journal articles

- references to the guidelines in 'grey literature' such as websites, conference proceedings or online public documents

- website visitor and download metrics

- social media metrics.

Monitoring citations or using web analytics is the most common approach to collecting user data on guidelines, but may not be very useful in describing what is happening in practice.

Consider how you can use data to:

- evaluate guideline dissemination activities

- assess whether current clinical practice conforms to guideline recommendations

- assess whether health outcomes have changed or whether the guidelines have contributed to changes in clinical practice or health outcomes

- assess the guidelines’ impact on consumers’ knowledge and understanding.

6. Tell an impact story

Begin to construct a story with the information you collect as this will become an important communication tool throughout the life of your guideline.

Think about the different audiences you want to communicate to, such as funders, clinicians, users, the public or patients, and try to anticipate questions they will ask.

Do not try to present a large collection of facts, but instead focus on context, outlining the work involved and the likely benefits of this work in a narrative summary to accompany the data.

An example is the NHMRC case study on tuberculosis control in Australia. The data used to construct this case study were sourced from NHMRC’s internal historical records, a range of documents in the public domain (including a PhD thesis), a number of published monographs, and statistics provided by the Australian Institute of Health and Welfare.

An impact story does not have to be perfect; it simply needs to be communicated. You may never be able to demonstrate that it was the guideline that made the particular difference, but you can speak about the part you think it played. That is, while you may have difficulties with attribution (how much of the impact can be claimed by the guideline), you should not have difficulties with contribution (showing that the guidelines contributed in some way to the ultimate impact).

High-quality and accessible data, such as a national registry of health indicators tracked over time, can certainly help, but the aim is to make the best and most creative use of the data that are available to you.

Case studies are just one example of tools to summarise the lessons learnt in assessing impact. NHMRC case studies demonstrate that outcomes and impact can take many years, and the combined work of many people and organisations, to generate.

Resources

RAND Corporation (2016). 100 Metrics to Assess and Communicate the Value of Biomedical Research: An Ideas Book.

CSIRO (2015). Impact Evaluation Guide. Commonwealth Scientific and Industrial Research Organisation.

Centre for Epidemiology and Evidence. Developing and Using Program Logic: A Guide. Evidence and Evaluation Guidance Series, Population and Public Health Division. Sydney: NSW Ministry of Health, 2017.

Center for Theory of Change: Setting Standards for Theory of Change

National Institute for Health and Care Excellence: NICE impact reports

Useful guidelines

This is a list of guidelines that have published impact plans or have attempted to evaluate the impact of their guideline recommendations:

A National Guideline for the Assessment and Diagnosis of Autism Spectrum Disorders in Australia

Clinical Guidelines for Stroke Management

Evidence-based Clinical Practice Guideline for Deprescribing Cholinesterase Inhibitors and Memantine

National Blood Authority’s Patient Blood Management Guidelines

References

Armstrong, M. J., C. D. Mullins, G. S. Gronseth and A. R. Gagliardi (2018). "Impact of patient involvement on clinical practice guideline development: a parallel group study." Implement Sci 13(1): 55.

Bolton, D. O., D.: O'Connor, E.: Teh, J.: Lawrentschuk, N.: Nzenza, T.: Ranasinghe, W. (2018). "Primary healthcare screening of prostate cancer: The impact of clinical practice guidelines." BJU International 121 (Supplement 1): 27.

Cadilhac, D. A., N. E. Andrew, N. A. Lannin, S. Middleton, C. R. Levi, H. M. Dewey, B. Grabsch, S. Faux, K. Hill, R. Grimley, A. Wong, A. Sabet, E. Butler, C. F. Bladin, T. R. Bates, P. Groot, H. Castley, G. A. Donnan, C. S. Anderson and C. Australian Stroke Clinical Registry (2017). "Quality of Acute Care and Long-Term Quality of Life and Survival: The Australian Stroke Clinical Registry." Stroke 48(4): 1026-1032.

CSIRO (2015). Impact Evaluation Guide. P. E. U. Commonwealth Scientific and Industrial Research Organisation. Canberra, CSIRO.

Guthrie, S., J. Krapels, C. A. Lichten and S. Wooding (2016). 100 Metrics to Assess and Communicate the Value of Biomedical Research: An Ideas Book, RAND Corporation.

Heinemann, A. W., E. J. Roth, K. Rychlik, K. Pe, C. King and J. Clumpner (2003). "The impact of stroke practice guidelines on knowledge and practice patterns of acute care health professionals." Journal of Evaluation in Clinical Practice 9(2): 203-212.

Horning, K. K., J. D. Hoehns and W. R. Doucette (2007). "Adherence to clinical practice guidelines for 7 chronic conditions in long-term-care patients who received pharmacist disease management services versus traditional drug regimen review." Journal of Managed Care Pharmacy 13(1): 28-36.

Inderjeeth, C. A. G., D.: Poland, K.: Ingram, K. (2009). "The impact of an osteoporosis clinical guideline on rates of referral to an osteoporosis clinic." Bone 1): S80.

Ioannidis, J. P. A., S. Greenland, M. A. Hlatky, M. J. Khoury, M. R. Macleod and D. Moher (2014). "Increasing value and reducing waste in research design, conduct, and analysis." Lancet 383.

Kneale, D., J. Thomas and K. Harris (2015). "Developing and Optimising the Use of Logic Models in Systematic Reviews: Exploring Practice and Good Practice in the Use of Programme Theory in Reviews." PLoS One 10(11): e0142187.

Lamb, E. J. M., W. G. (2012). "A decade after the KDOQI CKD guidelines: Impact on clinical laboratories." American Journal of Kidney Diseases 60(5): 719-722.

Mills, T., Lawton, R. & Sheard, L. Advancing complexity science in healthcare research: the logic of logic models. BMC Med Res Methodol 19, 55 (2019). https://doi.org/10.1186/s12874-019-0701-4

NHMRC (2013). Australian Dietary Guidelines. Canberra, National Health and Medical Research Council.

Ramanathan, S., P. Reeves, S. Deeming, J. Bernhardt, M. Nilsson, D. A. Cadilhac, F. R. Walker, L. Carey, S. Middleton, E. Lynch and A. Searles (2018). "Implementing a protocol for a research impact assessment of the Centre for Research Excellence in Stroke Rehabilitation and Brain Recovery." Health Research Policy and Systems 16(1): 71.

Tilley, H. B., Louise; Cassidy, Caroline (2018). Research Excellence Framework (REF) impact toolkit. Overseas Development Institute. http://www.odi.org.

Acknowledgements

NHMRC would like to acknowledge and thank all the developers who submitted comments and met with NHMRC during the Dec 2020 - March 2021 consultation period: Do guidelines make a difference?

NHMRC would also like to acknowledge and thank Dr Alex Aitkin and the members of NHMRC's Health Translation Advisory Committee for their contributions to this module.

Version 3.5

Suggested citation: NHMRC. Guidelines for Guidelines: Guideline impact

https://nhmrc.gov.au/guidelinesforguidelines/develop/guideline-impact. Last published 15 September 2021