Identifying the evidence

Recommendations made in guidelines should be informed by well-conducted systematic reviews.

The quality of systematic reviews will depend on the ability of the reviewer to identify as much relevant evidence as possible.

There is an ever increasing body of evidence that can be used to inform guideline recommendations; however, before you consider reviewing this evidence you should determine whether there have been previous attempts to review the literature. Existing reviews and guidelines can save a lot of time and resources as they may address most or all of the questions relevant to your review. These can be found by searching databases such as the Cochrane Database of Systematic Reviews (CDSR) and the Guidelines International Network (G-I-N) library.

Conducting broad searches will ideally capture all relevant evidence, but this process might also identify many studies that are not relevant. You should aim to find a balance between the sensitivity (the proportion of relevant articles retrieved) and specificity (the proportion of irrelevant articles not retrieved) of your search (Montori, Wilczynski, Morgan & Haynes, 2005).
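The balance between sensitivity and specificity can be made concrete with a small calculation. The sketch below is illustrative only: the function name and the counts are hypothetical, and in practice the number of relevant articles missed is rarely known exactly and is usually estimated against a reference set of known relevant studies.

```python
def search_performance(relevant_retrieved, relevant_missed,
                       irrelevant_retrieved, irrelevant_not_retrieved):
    """Return sensitivity and specificity of a search as proportions.

    sensitivity = relevant articles retrieved / all relevant articles
    specificity = irrelevant articles not retrieved / all irrelevant articles
    """
    sensitivity = relevant_retrieved / (relevant_retrieved + relevant_missed)
    specificity = irrelevant_not_retrieved / (
        irrelevant_not_retrieved + irrelevant_retrieved
    )
    return {"sensitivity": sensitivity, "specificity": specificity}


# Hypothetical counts for a database of 10,050 records, 50 of them relevant.
result = search_performance(
    relevant_retrieved=45,
    relevant_missed=5,
    irrelevant_retrieved=955,
    irrelevant_not_retrieved=9045,
)
print(result)
```

With these invented counts the search finds 45 of 50 relevant articles (sensitivity 0.9) while still returning 955 irrelevant records to screen (specificity 0.9045), which shows why a broad search increases the screening workload.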

Bibliographic databases of peer-reviewed literature are the primary source of information on which to base a systematic review. There is now a recognised need to include unpublished studies and other non-commercial evidence as they can provide a more comprehensive view of the topic. Additionally, excluding these data can introduce significant bias (Adams, Smart & Huff, 2017). As an example, there is a high incidence of unpublished data for recommendations involving drugs because results from clinical drug trials are sometimes not reported or published (Brænd, Straand, Jakobsen & Klovning, 2016).

Non-commercial and non-peer-reviewed literature is often referred to as ‘grey literature’ and includes government reports, public health monitoring or surveillance data, and data from clinical trials registries. Unpublished evidence, such as unpublished industry data or cultural knowledge, may not even be in the public domain and can be challenging to identify. Finding it may involve actively seeking information from individuals or organisations through interviews or written correspondence. If you do decide to seek information outside of bibliographic databases, it is important to document these decisions in your search strategy, along with how you searched for, retrieved and considered this information within your guideline.

You should also be aware that in very specific circumstances, such as when you need to conduct a narrative synthesis as part of your evidence review, other types of evidence relevant for your guideline might not fit within the boundaries of knowledge as defined above. These forms of evidence exist and are equally valid for consideration in an evidence evaluation, although they can’t be subjected to meta-analysis in a formal systematic review process (Popay et al. 2006). These forms of evidence might include traditional knowledge or community knowledge and might only be obtained through unconventional search strategies involving discussions with traditional knowledge holders during the guideline development process. These kinds of strategies are not discussed in this module.

Systematic review search methods are currently evolving. Automation is enabling a transition from conventional ‘pull’ methods, in which the reviewer searches databases to identify studies and then ‘pulls’ them out, to ‘push’ methods. Particularly useful for living systematic reviews, these automated systems search databases using stored search criteria and alert the reviewer when a citation that matches those criteria is found (Thomas et al. 2017). Two automated systems that simplify the search process are the Epistemonikos and Turning Research Into Practice (TRIP) databases. In addition, the ‘My NCBI’ functionality within PubMed allows the reviewer to save searches and set up alerts, thereby speeding up the search process.
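The idea of re-running a stored search and being notified of new citations can be sketched as follows. This is not how My NCBI, Epistemonikos or TRIP work internally. It is a minimal illustration that builds a request URL for the NCBI E-utilities `esearch` endpoint, restricted to records added in the last few days. The function name and the example query are hypothetical, and the code only constructs the URL; it does not send a request.

```python
from urllib.parse import urlencode

# NCBI E-utilities search endpoint.
EUTILS_ESEARCH = "https://eutils.ncbi.nlm.nih.gov/entrez/eutils/esearch.fcgi"


def build_alert_url(stored_query: str, days_back: int = 7,
                    max_results: int = 100) -> str:
    """Build an esearch URL that returns records added in the last `days_back` days."""
    params = {
        "db": "pubmed",
        "term": stored_query,
        "datetype": "edat",    # filter on the date the record entered the database
        "reldate": days_back,  # only records from the last N days
        "retmax": max_results,
        "retmode": "json",
    }
    return f"{EUTILS_ESEARCH}?{urlencode(params)}"


# Hypothetical stored search, re-run weekly by a scheduler of your choice.
alert_url = build_alert_url("prostate cancer[tiab]")
print(alert_url)
```

A scheduled job could fetch this URL and notify the reviewer whenever the returned list of identifiers is not empty. For most guideline developers, the built-in alert features of the databases themselves are likely to be the simpler option.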

Whichever search method you use, the key is to produce a comprehensive search strategy that balances sensitivity and specificity. You need to ensure that your search includes a variety of sources and is accurately recorded for transparency and reproducibility (Bramer, de Jonge, Rethlefsen, Mast & Kleijnen, 2018).

This module focuses on developing search strategies, database navigation, citation management and how best to document the process. Methods for developing inclusion and exclusion criteria, or determining what type of studies you should include are described elsewhere (see the Deciding what evidence to include module). Other modules you might find useful include Forming the questions and Selecting studies.

What to do

1. Plan your search

Before you start planning your evidence search, you need to know what you are looking for. This can be done by first defining the questions (see the Forming the questions module) and determining what types of evidence you would like to include (see the Deciding what evidence to include module).

Your initial search should focus on identifying existing relevant guidelines that may be suitable for adoption or adaptation (see the Adopt, adapt or start from scratch module). An important source of clinical guidelines is the Guidelines International Network (G-I-N) database (membership required). Alternatively, the National Institute for Health and Care Excellence (NICE) Public Health Guidance and advice list and the Australian Department of Health Series of National Guidelines (SoNGs) on communicable diseases are important sources for public health guidelines.

Identified guidelines may address some or all of the questions that will be covered in your guideline. Where some of the questions are not addressed, you should search for existing systematic reviews that may help to fill the gaps. Important sources of systematic reviews include PROSPERO, the Cochrane Database of Systematic Reviews (CDSR), the Trip Database and the Joanna Briggs Institute Database of Systematic Reviews and Implementation Reports.

Where existing guidelines and systematic reviews fail to address all of the questions relevant to your guideline, you will need to conduct an additional search to identify this evidence. The search strategy will consist of two components:

- evidence relating to questions that have not been addressed

- a date-limited search of evidence to update the relevant guideline or systematic review from the time of the most recent search on which it was based.

Conducting a comprehensive search of multiple evidence sources is a complex task and you should avoid developing your own search strategies if you lack experience. Seek advice from someone with searching expertise; for example, Cochrane review authors are prompted to use, or seek advice from, a trials search coordinator (Cochrane Handbook section 6.3.1). Also consider consulting information specialists who are experienced in developing search strategies, such as health librarians. If you do not have a systematic reviewer or methodologist within your guideline development group and have sufficient resources, it may be beneficial to commission a systematic review. Consider approaching organisations such as Cochrane Australia, the Joanna Briggs Institute or other health technology assessment (HTA) agencies that may be listed on government panels.

Some key factors to consider when planning a literature search include:

- key words to use in your search

- sources to search; for example, which bibliographic databases or grey literature sources

- any filters you will use, such as language or date limits

- how to manage identified references

- the bibliographic software you will use for managing your references.

In addition to searching for published data and grey literature, you should devise a plan for identifying unpublished data (Wolfe, Gotzsche & Bero, 2013). For example, you might need to identify companies, organisations or individual research groups that have conducted research in the topic area and might not have published the results. You will also need to determine the most appropriate method for contacting them, what information you would like to request and how you might acknowledge this work in your review and final guideline.

2. Determine sources of information

There are a wide range of published and unpublished information sources that can inform a systematic review. The primary published sources are bibliographic databases of peer-reviewed journal articles such as MEDLINE, CINAHL, PsycINFO, EMBASE, Cochrane Central Register of Controlled Trials (CENTRAL) and Global Health (via Ovid) (Table 1).

Grey literature is an increasingly important source of evidence and is defined as any literature or information that is not commercially published or searchable within standard databases. Key sources of grey literature include clinical trials databases, government reports, disease registries, census data, theses and dissertations. A 2007 Cochrane review demonstrated that results from grey literature can significantly affect the outcome of a review (Hopewell, McDonald, Clarke & Egger, 2007). This is because grey literature more often reports negative or inconclusive findings, which generally aren’t included in published studies. In addition, systematic reviews that rely on published evidence miss an average of 64% of data on adverse effects (Golder, Loke, Wright & Norman, 2016).

In addition to bibliographic databases and grey literature, a search for unpublished data should be conducted. This evidence can take many forms, such as clinical trial results, pharmaceutical company data (for example, post-market adverse event data) and cultural knowledge (see Table 1). Primary sources of cultural knowledge might include letters, diaries, taped interviews, photographs and personal journals.

The sources of information that you search will be informed by your topic. For example, if you were conducting a review on treatment options for prostate cancer there would be little use searching TOXNET (a toxicology database) for evidence. However, this database would be integral to any review assessing the environmental health impact of polycyclic aromatic hydrocarbons. Be sure to tailor your search strategy to your topic of interest.

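| Source of evidence | Description/Examples |
|---|---|
| Bibliographic databases | MEDLINE, CINAHL, PsycINFO, EMBASE, Cochrane Central Register of Controlled Trials (CENTRAL), Global Health (via Ovid) |
| Grey literature | Clinical trials databases, government reports, disease registries, census data, theses and dissertations |
| Unpublished evidence | Clinical trial results, pharmaceutical company data, cultural knowledge |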

* The Australian Government funds free access to the Cochrane Library for all Australian residents.

3. Test your search strategy

Developing a comprehensive search strategy is usually an iterative process. Once you have determined where evidence might be available, you can conduct an initial search to expand the key terms. Your research question(s) will outline the population of interest, the target intervention/exposure, the comparator and one or more outcomes (see the Forming the questions module). For example, your objective may be ‘to determine the difference in overall survival and sexual function between active surveillance and active treatment for men with low risk prostate cancer’. The goal is to use these basic terms to identify synonyms and related terms to maximise the retrieval of relevant evidence (Table 2).

Search one or two core databases, for example MEDLINE or EMBASE, using key terms to identify studies related to your topic of interest. Check that the search has identified some relevant articles. Review these articles to identify additional relevant keywords in the title, abstract or indexing terms. For example, searching ‘active surveillance’ may identify additional terms such as ‘watchful waiting’, ‘active monitoring’ and ‘observation’. You should also search for Medical Subject Headings (MeSH), the controlled vocabulary used for indexing journal articles within MEDLINE (Table 2). Assessment of the quality of your search strategy is described in the next section.

Your review will generally focus on several outcomes related to your topic of interest. For example, a systematic review may assess the safety and effectiveness of chemotherapy for treating liver cancer. This review will include some primary outcomes of interest such as overall survival, response rate and toxicity. However, numerous other secondary outcomes may be reported, such as quality of life, incidence of hand-foot syndrome and infection. It is difficult and time consuming to include all possible outcomes in your search strategy. It has also been demonstrated that including adverse event terms in a search can miss between 4% and 8% of relevant studies (Golder, Wright & Rodgers, 2014). Leaving the outcome field blank helps ensure that relevant studies are identified regardless of which outcomes they report.

Text terms as well as MeSH terms should be used to ensure you capture more recent articles that may not have had MeSH headings assigned to them yet. Note that you don’t need to include every element of the PI/ECO framework in the search terms; two may suffice. For example, you may specify only the population and intervention/exposure if any comparator(s) and outcomes are of interest.

* The inclusion of outcomes in search strings can reduce the number of relevant studies identified (Golder et al. 2014)
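The approach described in this section, combining synonyms for each concept and leaving the outcome blank, can be sketched in a few lines. The example below is a minimal illustration rather than a validated search strategy: synonyms within a concept are joined with OR, concepts are joined with AND, and empty concepts are skipped. The function names are hypothetical, and ‘prostatic neoplasms’ is added here as an assumed subject-heading style synonym that is not taken from this module.

```python
def or_block(terms):
    """Join synonyms for one concept with OR, quoting multi-word phrases."""
    quoted = [f'"{t}"' if " " in t else t for t in terms]
    return "(" + " OR ".join(quoted) + ")"


def build_query(concepts):
    """Join concept blocks with AND, skipping concepts that are left blank."""
    blocks = [or_block(terms) for terms in concepts.values() if terms]
    return " AND ".join(blocks)


concepts = {
    # "prostatic neoplasms" is an assumed synonym added for illustration.
    "population": ["prostate cancer", "prostatic neoplasms"],
    "intervention": [
        "active surveillance",
        "watchful waiting",
        "active monitoring",
        "observation",
    ],
    "comparator": [],  # any comparator is of interest
    "outcome": [],     # left blank so studies are not missed because of outcome terms
}

query = build_query(concepts)
print(query)
```

The resulting string is generic Boolean logic. It still needs to be translated into the syntax of each database and reviewed by an information specialist before use.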

4. Conduct your search

Searching for evidence can be a complex and time-consuming process. For instance, many public health guidelines, such as guidelines for reducing risk from drinking alcohol or dietary guidelines, cover a complex topic with numerous scientific questions to answer. If your review focuses on a broad or complex topic that needs a complex search strategy, it may be more practical to employ an expert to develop the strategy for you. If you develop your own search strategy, consider asking an information specialist to review your work before beginning the search.

Published literature

Once you have developed a comprehensive list of search terms, the next step is to map them into your target databases. Each database is different. For example, a basic search for articles with prostate cancer and radiotherapy in the title or abstract is structured differently depending on the database searched (Table 3). Following a web-based survey of experts, the Canadian Agency for Drugs and Technologies in Health (CADTH) has published a Peer Review of Electronic Search Strategies (PRESS) evidence-based checklist (McGowan et al. 2016). This validated checklist is used to evaluate the quality and completeness of an electronic search strategy. Its criteria fall into six categories:

- translation of the research question

- Boolean and proximity operators, which will vary based on the search service

- subject headings, which are database specific

- text word searching, using free text

- spelling, syntax and line numbers

- limits and filters.
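To illustrate how the same title and abstract search is written differently in different databases, the sketch below formats the prostate cancer and radiotherapy example for PubMed (using the `[tiab]` field tag) and for databases on the Ovid platform (using the `.ti,ab.` field suffix). The function names are hypothetical, only these two syntaxes are covered, and the output should always be checked against the current search guide for each database.

```python
def _phrase(term: str) -> str:
    """Quote multi-word terms so PubMed searches them as a phrase."""
    return f'"{term}"' if " " in term else term


def pubmed_title_abstract(terms):
    """PubMed: each term carries its own [tiab] field tag."""
    return " AND ".join(f"{_phrase(t)}[tiab]" for t in terms)


def ovid_title_abstract(terms):
    """Ovid: the .ti,ab. suffix applies to the whole bracketed expression."""
    return "(" + " and ".join(terms) + ").ti,ab."


terms = ["prostate cancer", "radiotherapy"]
pubmed_query = pubmed_title_abstract(terms)
ovid_query = ovid_title_abstract(terms)

print(pubmed_query)  # "prostate cancer"[tiab] AND radiotherapy[tiab]
print(ovid_query)    # (prostate cancer and radiotherapy).ti,ab.
```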

Numerous guides have been developed instructing the user how to design comprehensive search strategies using popular databases, for example, PubMed tutorial, Ovid Embase Database Guide, Monash University Database operators. To assist in the development of search strings, Bond University’s Centre for Research in Evidence-Based Practice (CREBP) has developed a Polyglot Search tool. The Polyglot program converts search strings specific to one database into a format applicable to other databases. You should also consider reviewing previous systematic reviews for examples of appropriate search strategies.

You should consider applying filters to your search such as language, date or gender. For example, if you were conducting a review on robotic prostatectomy for prostate cancer you could limit your search to studies after the year 2000 when the first robotic prostatectomy was described (Shuford, 2007). Although applying filters can improve the specificity of your search, care must be taken to avoid excluding relevant studies. Possible exclusion most frequently affects studies published before 1990 due to non-standardised reporting and indexing (Relevo, 2012).
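A date limit such as the one in the robotic prostatectomy example can be written directly into the search string. The sketch below uses PubMed’s publication date range syntax, with `3000` as an open-ended upper bound. The function name and the example query are hypothetical, and other databases express date limits differently.

```python
def limit_from_year(query: str, start_year: int) -> str:
    """Restrict a PubMed query to records published from 1 January of start_year."""
    date_range = (
        f'("{start_year}/01/01"[Date - Publication] : "3000"[Date - Publication])'
    )
    return f"({query}) AND {date_range}"


limited_query = limit_from_year('"robotic prostatectomy"[tiab]', 2000)
print(limited_query)
```

Recording the limit as part of the search string, rather than applying it afterwards through the interface, makes the filter easier to document and reproduce.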

You should also consider hand-searching relevant journals. A Cochrane review demonstrated that 35% of randomised controlled trials identified by hand-searching were not indexed in MEDLINE. The majority of these were reported in meeting abstracts and supplementary articles published prior to 1991 (Hopewell, Clarke, Lefebvre & Scherer, 2007).

Grey literature

Unlike searching bibliographic databases, there is no single strategy for searching grey literature. Due to the large range of potential sources, searching grey literature can be highly subjective. You need to take great care to ensure that the most important sources of information are checked. The Canadian Agency for Drugs and Technologies in Health has developed a source guide of grey literature, which lists a variety of government and HTA organisations through which grey literature can be searched (Grey Matters). Other popular sources of grey literature include clinical trials databases, such as the Australian New Zealand Clinical Trials Registry and ClinicalTrials.gov; dissertations and theses databases in ProQuest or the British Library; and the New York Academy of Medicine’s Grey Literature Report.

Guideline development groups and expert committees are also a source of relevant grey literature, as members may have worked on issues that resulted in grey literature reports, or may know colleagues who have done so.

5. Manage references

When you have conducted your searches and identified references you will need a flexible system to store and sort these references. Citation management software provides a platform where you can record the number of citations identified and where they were identified. This is important for record keeping and also helps with the screening of articles, which is the next step in developing your review (see the Selecting studies module).

There are several programs available to manage citations, such as EndNote, RefWorks, JBI SUMARI, RevMan (Cochrane), Covidence (Cochrane), Mendeley, EPPI Reviewer 4, Zotero and Rayyan. These all differ in cost, functionality and the ability to import and export multiple file types. Many offer a free trial period and you should consider testing a few to determine which one works best for you. When determining which citation management software is right for you, consider its capacity to:

- remove duplicate references

- add full-text and store PDFs

- sort and organise references into sub-folders

- highlight terms of interest

- facilitate duplicate screening

- export the references into multiple formats

- cite-while-you-write (CWYW) to enable easy integration of references into word-processing programs.

Once your search is completed, export all of the identified references to your citation management software. You should then separate them into sub-folders based on information source, for example one folder for MEDLINE and one for CINAHL, so that the number of references identified in each source is recorded. When this process has been completed you are ready to remove duplicates and screen individual references (see the Selecting studies module). Numerous tutorials have been developed to assist users, such as The Little EndNote How-To Book, the Zotero quick start guide, or Getting started with Mendeley Desktop. Several library guides specific to citation management for literature reviews are also available. Cochrane provides extensive instruction on the use of RevMan and Covidence.
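Citation management software normally handles duplicate removal for you, but the underlying logic is simple enough to sketch. The example below counts references per source and then removes duplicates, matching on DOI where one is present and otherwise on a normalised title plus year. The record fields, function names and example references (including the DOIs) are invented for illustration. Real tools use more forgiving matching, and this sketch will not match a record that has a DOI against a copy of the same record without one.

```python
import re
from collections import Counter


def normalise_title(title: str) -> str:
    """Lower-case the title and collapse punctuation and whitespace."""
    return re.sub(r"[^a-z0-9]+", " ", title.lower()).strip()


def dedup_key(ref: dict):
    """Prefer the DOI as the key; fall back to normalised title plus year."""
    doi = (ref.get("doi") or "").strip().lower()
    if doi:
        return ("doi", doi)
    return ("title", normalise_title(ref["title"]), ref.get("year"))


def remove_duplicates(refs):
    """Keep the first occurrence of each reference, preserving order."""
    seen = set()
    unique = []
    for ref in refs:
        key = dedup_key(ref)
        if key not in seen:
            seen.add(key)
            unique.append(ref)
    return unique


# Invented example records.
refs = [
    {"source": "MEDLINE", "title": "Active surveillance for low-risk prostate cancer",
     "year": 2015, "doi": "10.1000/example.1"},
    {"source": "EMBASE", "title": "Active Surveillance for Low-Risk Prostate Cancer.",
     "year": 2015, "doi": "10.1000/EXAMPLE.1"},
    {"source": "CINAHL", "title": "Watchful waiting and quality of life",
     "year": 2012, "doi": ""},
    {"source": "MEDLINE", "title": "Watchful waiting and quality of life",
     "year": 2012, "doi": None},
]

per_source = Counter(ref["source"] for ref in refs)  # counts before de-duplication
unique_refs = remove_duplicates(refs)
duplicates_removed = len(refs) - len(unique_refs)
print(dict(per_source), len(unique_refs), duplicates_removed)
```

Counting per source before removing duplicates keeps the figures needed later when documenting how many references each database contributed.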

6. Document the process

Thorough documentation of the search process is needed to demonstrate transparency and reproducibility. You should record the:

- databases searched

- exact search strategy employed in each database

- filters used during the search

- exact date(s) of the searches

- number of articles identified within each database.
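One way to capture the items listed above is a simple structured search log with one row per database search. The sketch below is a minimal example under stated assumptions: the class name, field names, search strings and counts are all hypothetical, and a spreadsheet with the same columns would serve equally well.

```python
import csv
import io
from dataclasses import asdict, dataclass, fields
from datetime import date


@dataclass
class SearchRecord:
    database: str
    search_string: str       # exact strategy as run in that database
    filters: str             # for example language or date limits
    search_date: date
    records_identified: int


def write_search_log(records, handle):
    """Write one CSV row per database search."""
    writer = csv.DictWriter(handle, fieldnames=[f.name for f in fields(SearchRecord)])
    writer.writeheader()
    for record in records:
        row = asdict(record)
        row["search_date"] = record.search_date.isoformat()
        writer.writerow(row)


# Invented entries for illustration.
log = [
    SearchRecord("MEDLINE (Ovid)", "(prostate cancer and radiotherapy).ti,ab.",
                 "English; 2000 onwards", date(2019, 3, 1), 420),
    SearchRecord("PubMed", '"prostate cancer"[tiab] AND radiotherapy[tiab]',
                 "English; 2000 onwards", date(2019, 3, 1), 510),
]

buffer = io.StringIO()
write_search_log(log, buffer)
print(buffer.getvalue())
```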

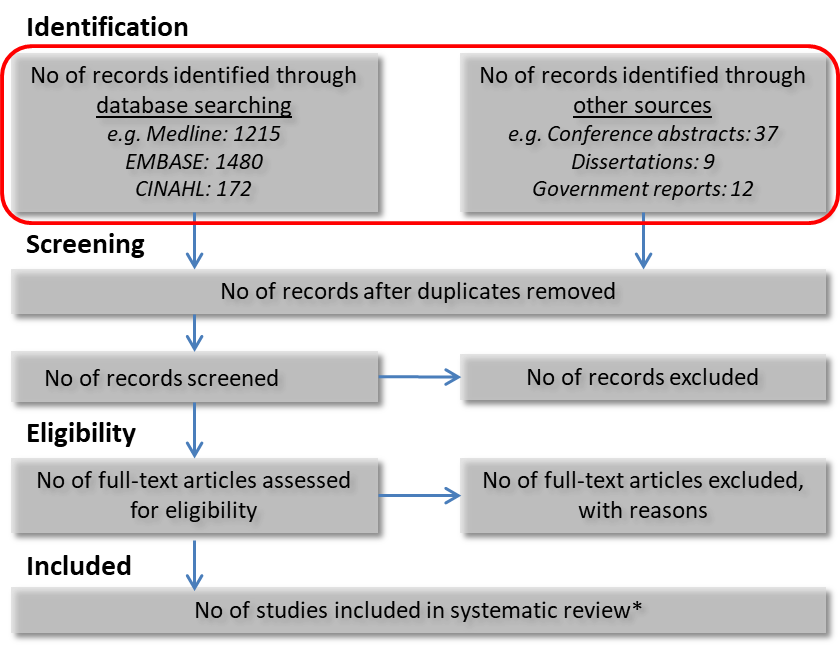

This information makes it easier to update the review in the future and provides the basis for the development of a study selection flow diagram. This diagram demonstrates the evidence identification and study selection process and is an important part of reporting for a systematic review. The most widely used method is the Preferred Reporting Items for Systematic Reviews and Meta-Analyses (PRISMA) flow diagram (Figure 1). This method has been refined and now contains several extensions focused on individual patient data, network meta-analysis and equity (see the Equity module). In this figure the red circle represents the first step and lists how many references were identified and where they were identified. This template is used when selecting studies for your evidence synthesis (see the Selecting studies module).

* Number of studies may differ from the number of articles, as multiple articles may present data relating to an individual study
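The numbers in a study selection flow diagram follow from simple arithmetic on the counts recorded during searching and screening. The sketch below shows that arithmetic with invented counts. The function and key names are hypothetical and the stages are simplified compared with the full PRISMA template, which also reports reasons for exclusion.

```python
def flow_diagram_counts(identified_by_source, duplicates_removed,
                        excluded_title_abstract, excluded_full_text):
    """Derive the counts for each stage of a study selection flow diagram."""
    identified = sum(identified_by_source.values())
    screened = identified - duplicates_removed
    full_text_assessed = screened - excluded_title_abstract
    included = full_text_assessed - excluded_full_text
    if min(screened, full_text_assessed, included) < 0:
        raise ValueError("Exclusions exceed the number of records available")
    return {
        "identified": identified,
        "screened": screened,
        "full_text_assessed": full_text_assessed,
        "included": included,
    }


# Invented counts for illustration.
counts = flow_diagram_counts(
    identified_by_source={"MEDLINE": 420, "EMBASE": 510, "CINAHL": 70},
    duplicates_removed=250,
    excluded_title_abstract=690,
    excluded_full_text=45,
)
print(counts)
```

With these counts, 1000 records are identified, 750 are screened after duplicates are removed, 60 are assessed in full text and 15 are included. Checking that the numbers reconcile at each stage is a quick way to catch record-keeping errors before the diagram is drawn.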

Your review should also document the exact strategies used during the search process. Be sure to present the exact search strings used, the search date, the filters that were used and any other information that improves transparency. The information you provide should facilitate exact replication of your search strategy. These can either be incorporated into the final guideline or be included in a technical document that accompanies the guideline.

7. Prepare to select your articles or studies

After running your searches, the next step is to screen the identified articles to determine whether they meet your inclusion criteria (see the Deciding what evidence to include module). You will likely find that many of the articles will be irrelevant as you sort through them. The correct use of citation management software is an important means of tracking the process. You should develop sub-folders for different categories, such as duplicate references — the same article identified in two or more databases; articles excluded following title/abstract screening; and articles excluded following full-text screening.

Be careful to mark retracted studies in your screening process and consider what impact the exclusion of these studies would have on the risk of bias assessment and your final conclusions.

For more information on the study selection process see the Selecting studies module.

Useful resources

Cochrane Handbook, Chapter 6 (Searching for studies)

Canadian Agency for Drugs and Technologies in Health (CADTH): Peer Review Electronic Search Strategy (PRESS) explanation and elaboration (including checklist and assessment form)

The Joanna Briggs Institute Reviewers Manual: p. 137-164 ‘An introduction to searching’

Automated search database Epistemonikos

Canadian Agency for Drugs and Technologies in Health (CADTH): Grey Matters: a practical tool for searching health-related grey literature

References

Acknowledgements

NHMRC would like to acknowledge and thank Professor Dianne O’Connell from Cancer Council NSW and Associate Professor Zachary Munn from the Joanna Briggs Institute for their contributions to this module as editors.

Version 5.0. Last updated 6 September 2019.

Suggested citation: NHMRC. Guidelines for Guidelines: Identifying the evidence. https://nhmrc.gov.au/guidelinesforguidelines/develop/identifying-evidence. Last published 6 September 2019.